For a while now, I have been looking for a sensible offsite backup solution for use at home. My requirements are simple: it must be cheap and locally encrypted (in other words, I keep the encryption keys, and the storage provider does not have access to my private files). One idea my friends and I had many years ago, before the cloud storage providers showed up, was to use Google mail as storage, writing a Linux block device storing blocks as emails in the mail service provided by Google, and thus get heaps of free space. On top of this one can add encryption, RAID and volume management to have lots of (fairly slow, I admit) cheap and encrypted storage. But I never found time to implement such a system. Over the last few weeks, however, I have looked at a system called S3QL, a locally mounted, network-backed file system with the features I need.

S3QL is a FUSE file system with a local cache and cloud storage, handling several different storage providers: any with an Amazon S3, Google Storage or OpenStack API. There are heaps of such storage providers. S3QL can also use a local directory as storage, which combined with sshfs allows for file storage on any ssh server. S3QL includes support for encryption, compression, de-duplication, snapshots and immutable file systems, allowing me to mount the remote storage as a local mount point, and to look at and use the files as if they were local, while the content is stored in the cloud as well. This allows me to have a backup that should survive a fire. The file system cannot be shared between several machines at the same time, as only one can mount it at a time, but any machine with the encryption key and access to the storage service can mount it if it is unmounted.

It is simple to use. I'm using it on Debian Wheezy, where the package is included already. So to get started, run apt-get install s3ql. Next, pick a storage provider. I ended up picking Greenqloud, after reading their nice recipe on how to use S3QL with their Amazon S3-compatible service, because I trust the laws in Iceland more than those in the USA when it comes to keeping my personal data safe and private, and thus would rather spend money on a company in Iceland. Another nice recipe is available from the article S3QL Filesystem for HPC Storage by Jeff Layton in the HPC section of Admin magazine. When the provider is picked, figure out how to get the API key needed to connect to the storage API. With Greenqloud, the key did not show up until I had added payment details to my account.

Armed with the API access details, it is time to create the file system. First, create a new bucket in the cloud. This bucket is the file system storage area. I picked a bucket name reflecting the machine that was going to store data there, but any name will do. I'll refer to it as bucket-name below. In addition, one needs the API login and password, and a locally created passphrase. Store it all in ~root/.s3ql/authinfo2 like this:

[s3c]
storage-url: s3c://s.greenqloud.com:443/bucket-name
backend-login: API-login
backend-password: API-password
fs-passphrase: local-password

I create my local passphrase using pwgen 50 or similar, but any sensible way to create a fairly random password should do.
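As one possible way to do this without extra packages, the following shell sketch draws a passphrase from /dev/urandom and writes an authinfo2 file that only its owner can read. The function names gen_passphrase and write_authinfo are made up for this example, and API-login and API-password are the same placeholders as in the file shown above.

```shell
#!/bin/sh
# Sketch only: gen_passphrase and write_authinfo are hypothetical helper names.

# Print a random alphanumeric passphrase of the given length (default 50).
gen_passphrase() {
    LC_ALL=C tr -dc 'A-Za-z0-9' < /dev/urandom | head -c "${1:-50}"
}

# Write an authinfo2-style file. Arguments: target file, bucket name, passphrase.
write_authinfo() {
    (
        umask 077   # a newly created file gets mode 0600
        cat > "$1" <<EOF
[s3c]
storage-url: s3c://s.greenqloud.com:443/$2
backend-login: API-login
backend-password: API-password
fs-passphrase: $3
EOF
    )
}
```

Running write_authinfo /root/.s3ql/authinfo2 bucket-name "$(gen_passphrase 50)" would then produce a file like the one shown above. Keep a copy of the passphrase somewhere other than the machine being backed up.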

Armed with these details, it is now time to run mkfs.s3ql to create the file system, entering the API details and password when asked:

# mkdir -m 700 /var/lib/s3ql-cache
# mkfs.s3ql --cachedir /var/lib/s3ql-cache --authfile /root/.s3ql/authinfo2 \
  --ssl s3c://s.greenqloud.com:443/bucket-name
Enter backend login:
Enter backend password:
Before using S3QL, make sure to read the user's guide, especially
the 'Important Rules to Avoid Loosing Data' section.
Enter encryption password:
Confirm encryption password:
Generating random encryption key...
Creating metadata tables...
Dumping metadata...
..objects..
..blocks..
..inodes..
..inode_blocks..
..symlink_targets..
..names..
..contents..
..ext_attributes..
Compressing and uploading metadata...
Wrote 0.00 MB of compressed metadata.
#

The next step is mounting the file system to make the storage available.

# mount.s3ql --cachedir /var/lib/s3ql-cache --authfile /root/.s3ql/authinfo2 \
  --ssl --allow-root s3c://s.greenqloud.com:443/bucket-name /s3ql
Using 4 upload threads.
Downloading and decompressing metadata...
Reading metadata...
..objects..
..blocks..
..inodes..
..inode_blocks..
..symlink_targets..
..names..
..contents..
..ext_attributes..
Mounting filesystem...
# df -h /s3ql
Filesystem                              Size  Used Avail Use% Mounted on
s3c://s.greenqloud.com:443/bucket-name  1.0T     0  1.0T   0% /s3ql
#

The file system is now ready for use. I use rsync to store my backups in it, and as the metadata used by rsync is downloaded at mount time, rsync can check which files have changed without triggering any network traffic (and storage cost). To unmount, one should not use the normal umount command, as this will not flush the cache to the cloud storage. Instead, run the umount.s3ql command like this:

# umount.s3ql /s3ql
#
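Put together, a backup run could look roughly like the sketch below. It uses only the commands and options shown above plus rsync -a. The source directory /home, the backup subdirectory and the DRY_RUN switch are assumptions made for illustration. With DRY_RUN=1 (the default here) the commands are only printed, so the script can be tried without touching the bucket.

```shell
#!/bin/sh
# Sketch of a backup run: mount, rsync, unmount. Paths are examples.
URL=s3c://s.greenqloud.com:443/bucket-name
MNT=/s3ql
SRC=/home            # hypothetical directory to back up
DRY_RUN=${DRY_RUN:-1}

# Run a command, or just print it when DRY_RUN=1.
run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

backup() {
    run mount.s3ql --cachedir /var/lib/s3ql-cache \
        --authfile /root/.s3ql/authinfo2 --ssl --allow-root "$URL" "$MNT" || return 1
    run rsync -a "$SRC/" "$MNT/backup/"
    status=$?
    # Always unmount with umount.s3ql so the cache is flushed to the cloud.
    run umount.s3ql "$MNT" || return 1
    return $status
}
```

Adding a call to backup at the end of the script, and setting DRY_RUN=0, would start a real run. The rsync exit status is kept so that a failed copy is still reported after the file system has been unmounted cleanly.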

There is a fsck command available to check the file system and correct any problems detected. This can be used if the local server crashes while the file system is mounted, to reset the "already mounted" flag. This is what it looks like when processing a working file system:

# fsck.s3ql --force --ssl s3c://s.greenqloud.com:443/bucket-name
Using cached metadata.
File system seems clean, checking anyway.
Checking DB integrity...
Creating temporary extra indices...
Checking lost+found...
Checking cached objects...
Checking names (refcounts)...
Checking contents (names)...
Checking contents (inodes)...
Checking contents (parent inodes)...
Checking objects (reference counts)...
Checking objects (backend)...
..processed 5000 objects so far..
..processed 10000 objects so far..
..processed 15000 objects so far..
Checking objects (sizes)...
Checking blocks (referenced objects)...
Checking blocks (refcounts)...
Checking inode-block mapping (blocks)...
Checking inode-block mapping (inodes)...
Checking inodes (refcounts)...
Checking inodes (sizes)...
Checking extended attributes (names)...
Checking extended attributes (inodes)...
Checking symlinks (inodes)...
Checking directory reachability...
Checking unix conventions...
Checking referential integrity...
Dropping temporary indices...
Backing up old metadata...
Dumping metadata...
..objects..
..blocks..
..inodes..
..inode_blocks..
..symlink_targets..
..names..
..contents..
..ext_attributes..
Compressing and uploading metadata...
Wrote 0.89 MB of compressed metadata.
#

Thanks to the cache, working on files that fit in the cache is very quick, about the same speed as local file access. Uploading large amounts of data is for me limited by the bandwidth out of and into my house. Uploading 685 MiB with a 100 MiB cache gave me 305 kiB/s, which is very close to my upload speed, and downloading the same Debian installation ISO gave me 610 kiB/s, close to my download speed. Both were measured using dd. So for me, the bottleneck is my network, not the file system code. I do not know what a good cache size would be, but suspect that the cache should be larger than your working set.
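To put these numbers in perspective, a rough transfer time follows from size divided by rate. The helper below is only a back-of-the-envelope sketch (transfer_seconds is a name invented here) and ignores compression, de-duplication and protocol overhead.

```shell
# Estimate the seconds needed to move SIZE MiB at RATE kiB/s.
transfer_seconds() {
    awk -v size="$1" -v rate="$2" 'BEGIN { printf "%.0f\n", size * 1024 / rate }'
}

transfer_seconds 685 305    # upload of the ISO: prints 2300 (about 38 minutes)
transfer_seconds 685 610    # download of the ISO: prints 1150 (about 19 minutes)
```

The same function gives a quick idea of how long a first full backup would take on a given line before starting it.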

I mentioned that only one machine can mount the file system at a time. If another machine tries, it is told that the file system is busy:

# mount.s3ql --cachedir /var/lib/s3ql-cache --authfile /root/.s3ql/authinfo2 \
  --ssl --allow-root s3c://s.greenqloud.com:443/bucket-name /s3ql
Using 8 upload threads.
Backend reports that fs is still mounted elsewhere, aborting.
#

The file content is uploaded when the cache is full, while the metadata is uploaded once every 24 hours by default. To ensure the file system content is flushed to the cloud, one can either unmount the file system, or ask S3QL to flush the cache and metadata using s3qlctrl:

# s3qlctrl upload-meta /s3ql
# s3qlctrl flushcache /s3ql
#

If you are curious about how much space your data uses in the cloud, and how much compression and de-duplication cut down on the storage usage, you can use s3qlstat on the mounted file system to get a report:

# s3qlstat /s3ql
Directory entries: 9141
Inodes: 9143
Data blocks: 8851
Total data size: 22049.38 MB
After de-duplication: 21955.46 MB (99.57% of total)
After compression: 21877.28 MB (99.22% of total, 99.64% of de-duplicated)
Database size: 2.39 MB (uncompressed)
(some values do not take into account not-yet-uploaded dirty blocks in cache)
#
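The savings can also be computed directly from this report. The following sketch (s3ql_saved_mb is a hypothetical helper name) reads s3qlstat output on standard input and prints how many MB de-duplication and compression saved in total, assuming the report format shown above.

```shell
# Print "Total data size" minus "After compression", in MB.
s3ql_saved_mb() {
    awk -F': *' '
        /^Total data size/   { total = $2 + 0 }   # "22049.38 MB" -> 22049.38
        /^After compression/ { comp  = $2 + 0 }
        END { printf "%.2f\n", total - comp }
    '
}

# Usage on a mounted file system: s3qlstat /s3ql | s3ql_saved_mb
```

For the report above this prints 172.10, which is less than 1% of the 22049.38 MB stored.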

I mentioned earlier that there are several possible suppliers of storage. I did not try to locate them all, but am aware of at least Greenqloud, Google Storage, Amazon S3 web services, Rackspace and Crowncloud. The latter even accepts payment in Bitcoin. Pick one that suits your needs. Some of them provide several GiB of free storage, but the pricing models are quite different and you will have to figure out what suits you best.

While researching this blog post, I had a look at research papers and posters discussing the S3QL file system. There are several, which told me that the file system is getting a critical check by the science community and increased my confidence in using it. One nice poster is titled "An Innovative Parallel Cloud Storage System using OpenStack's SwiftObject Store and Transformative Parallel I/O Approach" by Hsing-Bung Chen, Benjamin McClelland, David Sherrill, Alfred Torrez, Parks Fields and Pamela Smith. Please have a look.

Given my problems with different file systems earlier, I decided to check out the mounted S3QL file system to see if it would be usable as a home directory (in other words, that it provides POSIX semantics when it comes to locking, umask handling, etc.). Running my test code to check file system semantics, I was happy to discover that no errors were found. So the file system can be used for home directories, if one chooses to do so.

If you do not want a locally mounted file system, and want something that works without the Linux FUSE file system, I would like to mention the Tarsnap service, which also provides locally encrypted backup using a command line client. It has a nicer access control system, where one can split out read and write access, allowing some systems to write to the backup and others to only read from it.

As usual, if you use Bitcoin and want to show your support of my activities, please send Bitcoin donations to my address 15oWEoG9dUPovwmUL9KWAnYRtNJEkP1u1b.

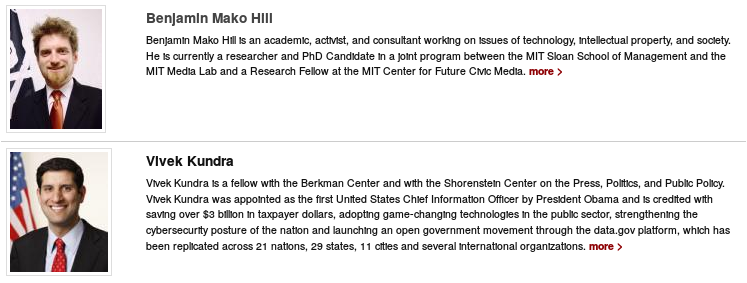

A friend recently commented on my rather unusual portrait on my (out of date) page on the Berkman website. Here's the story.

I joined Berkman as a fellow with a fantastic class of fellows that included, among many other incredibly accomplished people, Vivek Kundra: first Chief Information Officer of the United States. At Berkman, all the fellows are asked for photos and Vivek apparently sent in his official government portrait.

You are probably familiar with the genre. In the US at least, official government portraits are mostly pictures of men in dark suits, light shirts, and red or blue ties with flags draped blurrily in the background.

Not unaware of the fact that Vivek sat right below me on the alphabetically sorted Berkman fellows page, a small group that included Paul Tagliamonte (very familiar with the genre from his work with government photos in Open States) decided to create a government portrait of me using the only flag we had on hand late one night.

The result, shown in the screenshot above and in the Wayback Machine, was almost entirely unnoticed (at least to my knowledge) but was hopefully appreciated by those who did see it.

Stefano Zacchiroli opened

here's the list of RC bugs I've worked on during the last week.
